GenAI apps expose a wider attack surface than traditional web applications, and most teams are still trying to secure them with tools built for a different era. What used to be a well-defined application boundary now includes LLMs, prompt flows, external integrations, and AI-generated code that can change behavior at runtime.

This shift introduces risks that don’t map cleanly to traditional testing. Prompt injection, sensitive data exposure, insecure AI-generated code, and unpredictable model behavior are becoming part of the standard threat landscape. Traditional tools still focus on known vulnerabilities, but they fall short when it comes to LLM security testing and broader generative AI application security needs.

To keep up, teams are adopting GenAI AppSec tools that cover different layers of the stack, from application and API security to model-level validation and continuous GenAI vulnerability testing. In this blog we’ll break down eight such tools and where they fit in a modern AI AppSec strategy.

What makes GenAI app security different

GenAI apps introduce non-deterministic behavior that traditional AppSec workflows aren’t designed for. Outputs vary based on context, inputs are prompt-driven, and attackers can exploit this through prompt injection, model manipulation, and data leakage via responses. Unlike structured inputs in traditional systems, natural language prompts create a new class of vulnerabilities that are harder to standardize and test. Add LLM API call chains and autonomous actions into the mix, and a single input can trigger multiple downstream effects that are difficult to predict or trace.

There’s also a visibility problem. Model decision-making is often difficult to interpret, and outputs are unstructured, which makes it harder for traditional tools to detect sensitive data exposure or malicious behavior. AI-generated code adds another layer, introducing inherited vulnerabilities earlier in the pipeline.

At the same time, the application and API layer hasn’t changed. REST endpoints, authenticated flows, and business logic still carry the same risks as any modern web app.

GenAI AppSec is a multi-layer problem. You need coverage at both the model layer and the application layer. Most teams today are only covering one.

How to read this list

The tools in this list don’t solve the same problem. They cover different parts of the generative AI application security stack, and comparing them directly without context can be misleading.

Broadly, these tools fall into three layers:

Application and API layer

This is the surface your users interact with web apps, API endpoints, and authenticated flows. It includes business logic and integrations, and still carries all the standard web application risks.

Model and prompt layer

This is where the LLM receives and processes inputs. Risks here include prompt injection, data leakage, and unsafe or manipulated outputs areas traditional AppSec tools don’t cover.

Code pipeline layer

This covers how AI-generated or AI-assisted code is written and shipped. As AI coding tools become more common, risks shift earlier into the development lifecycle.

A team building a GenAI product will typically need coverage across all three. The goal here is not to compare tools directly, but to understand how they map to different parts of your attack surface.

Top GenAI AppSec tools

This list highlights the most relevant GenAI AppSec tools in 2026, covering different parts of the modern AI attack surface from application and API security to model-layer testing and code pipeline protection. Each one addresses a specific layer of generative AI application security, and most teams will need a combination to achieve meaningful coverage.

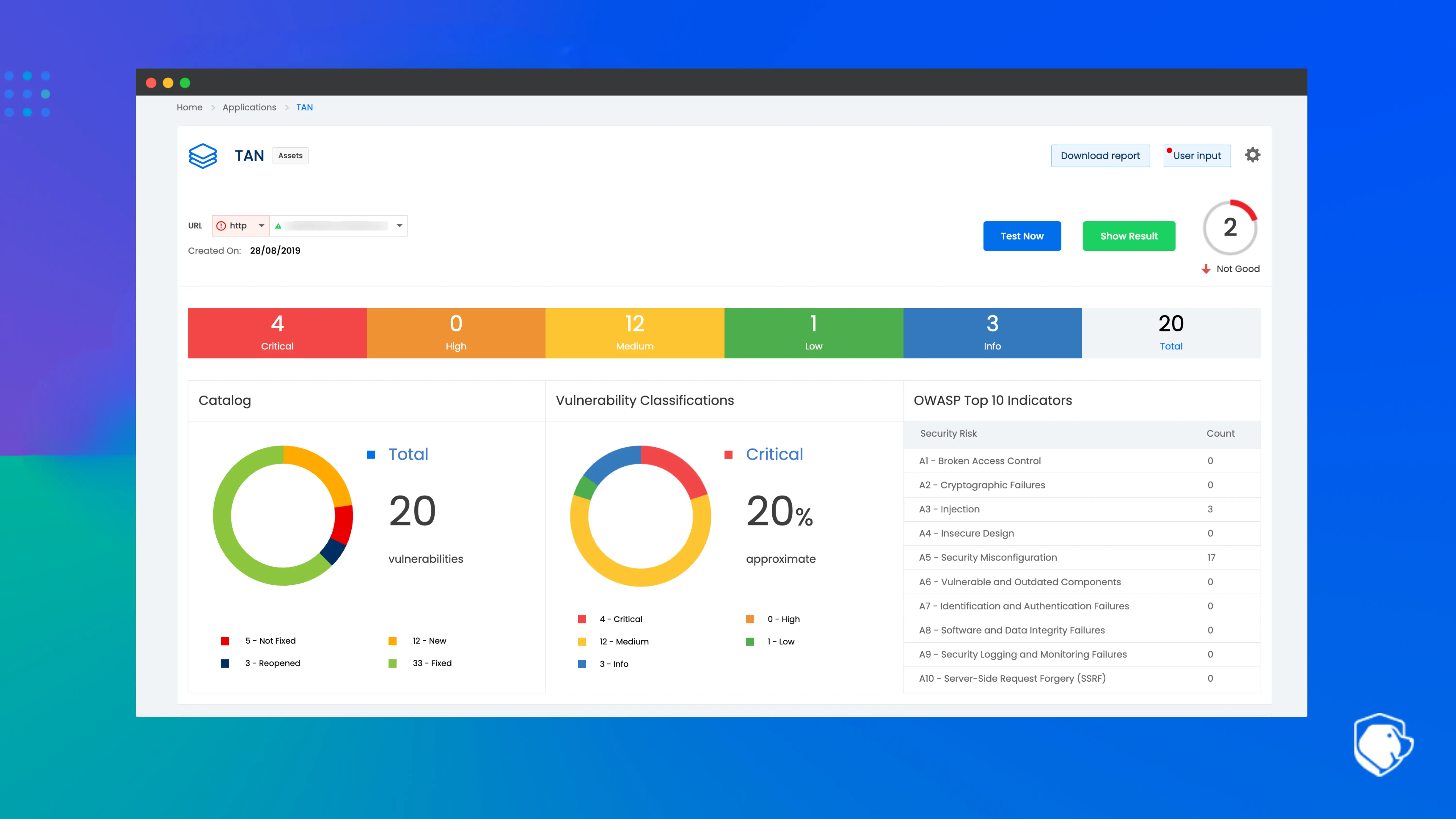

1. Beagle Security

Position : Application and API layer

Agentic AI pentesting for web applications and APIs including the REST and GraphQL endpoints that GenAI-powered products expose. Tests authenticated flows via a login recorder, runs business logic testing, and integrates into CI/CD pipelines. Uses in-house AI for attack simulation with no third-party LLM APIs involved.

Best for: Teams building GenAI-powered web apps or APIs who need visibility into real application-layer risks, especially in authenticated environments.

Trade-off: Focused on the application and API layer. It does not cover model-layer risks like prompt injection, so it needs to be paired with LLM-focused tools.

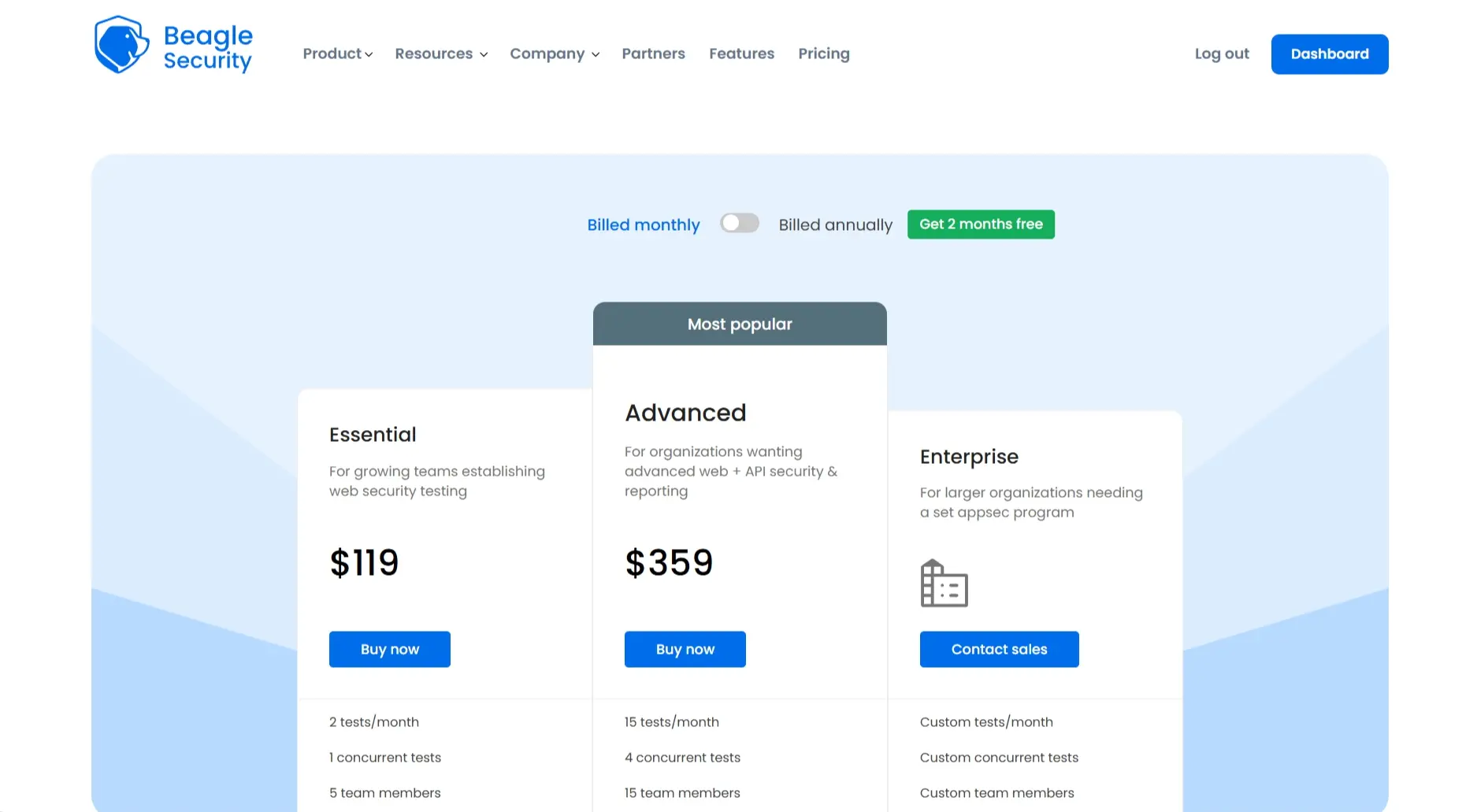

Pricing:

A comprehensive pricing list is available.

A free 14-day trial is available, with paid plans starting at $119/month.

Advanced and customized Enterprise plans are also offered.

Ratings & reviews

Beagle Security is highly-rated with a rating of 4.7/5 on G2. It is praised for its ease of use, detailed reporting, and cost-effectiveness, making it popular for both developers and non-technical business owners seeking proactive, scheduled security testing.

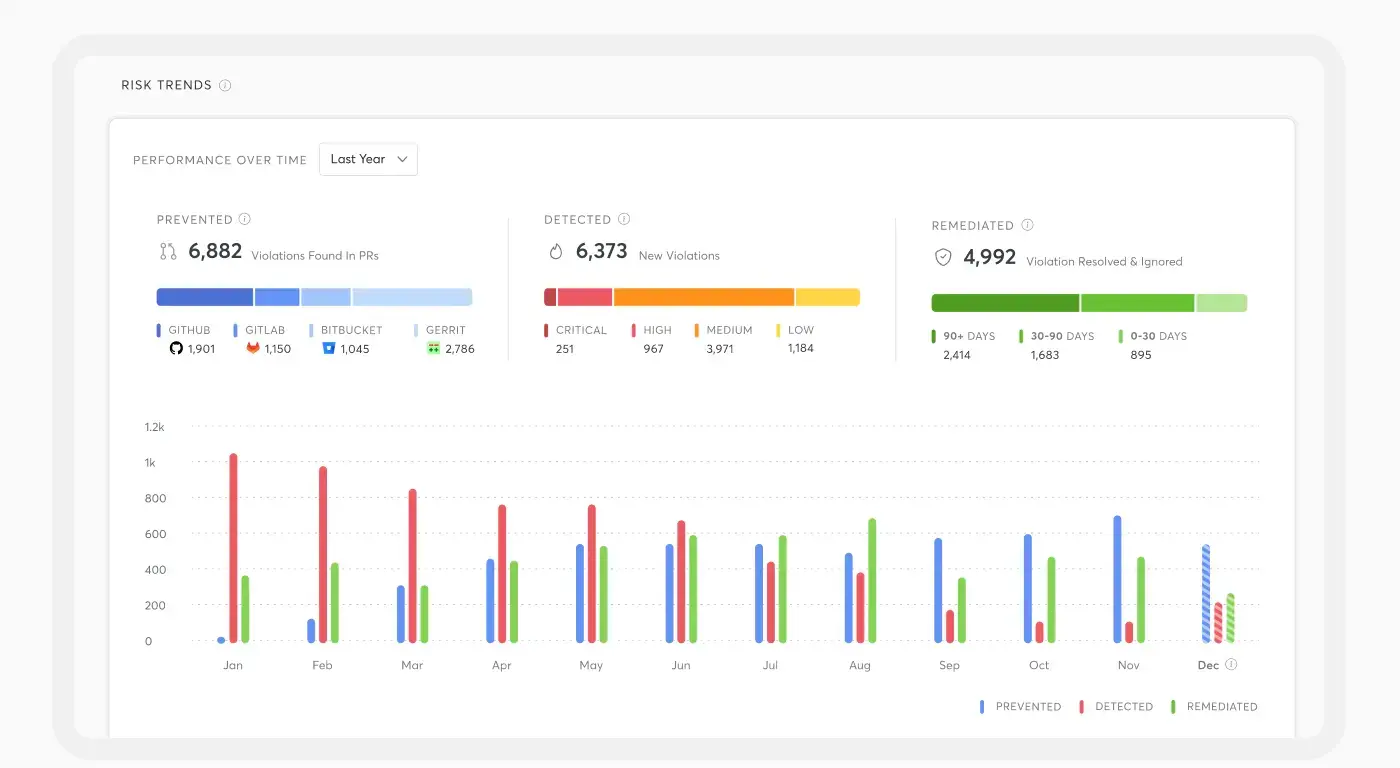

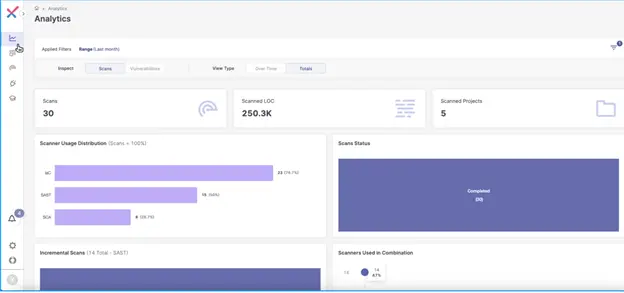

2. Cycode

Position : Code pipeline layer

Cycode is an ASPM platform that provides centralized visibility into security risks across the development lifecycle, including those introduced by AI-generated code. It identifies hardcoded secrets, insecure dependencies, and supply chain vulnerabilities, consolidating signals across repositories, CI/CD pipelines, and developer tools into a unified risk view.

Best for: AppSec teams that need centralized visibility into how GenAI is being used across codebases and the risks it introduces at scale.

Trade-off: Broad ASPM coverage means depth at individual layers may be limited compared to specialized point solutions.

Pricing

Subscription-based, typically structured around the number of monitored developers and the specific platform features required (annual contracts).

Estimated cost: Data indicates a price point of approximately $360 per monitored developer annually.

Ratings & reviews

Cycode holds a 4/5 rating on G2, with users highlighting its quick setup, ease of integration, and strong visibility into risks like exposed secrets and data leakage.

3. Checkmarx One Assist

Position : Code pipeline layer

Checkmarx One Assist extends traditional SAST and SCA capabilities with AI-assisted remediation. It detects insecure patterns introduced by AI coding assistants and provides contextual fix recommendations directly within developer workflows, reducing friction in fixing vulnerabilities in AI-generated code.

Best for: Enterprises already using Checkmarx who want to adapt existing SAST workflows to handle the increase in AI-generated code.

Trade-off: Primarily focused on static analysis. It does not test runtime application behavior or API-level vulnerabilities.

Pricing:

According to AWS Marketplace listings for 12-month contracts, Checkmarx One Essential lists for roughly $1,564 per user per year.

Ratings & reviews

Checkmarx carries a strong 4.2/5 rating on G2, driven by user praise for its highly accurate and configurable SAST engine. Many reviewers say Checkmarx stands out when scanning large or complex codebases, where its customizable rules help reduce false positives while uncovering deeply rooted issues. Some reviews also mention that teams get the most from Checkmarx when a dedicated AppSec engineer fine-tunes configurations.

4. SplxAI

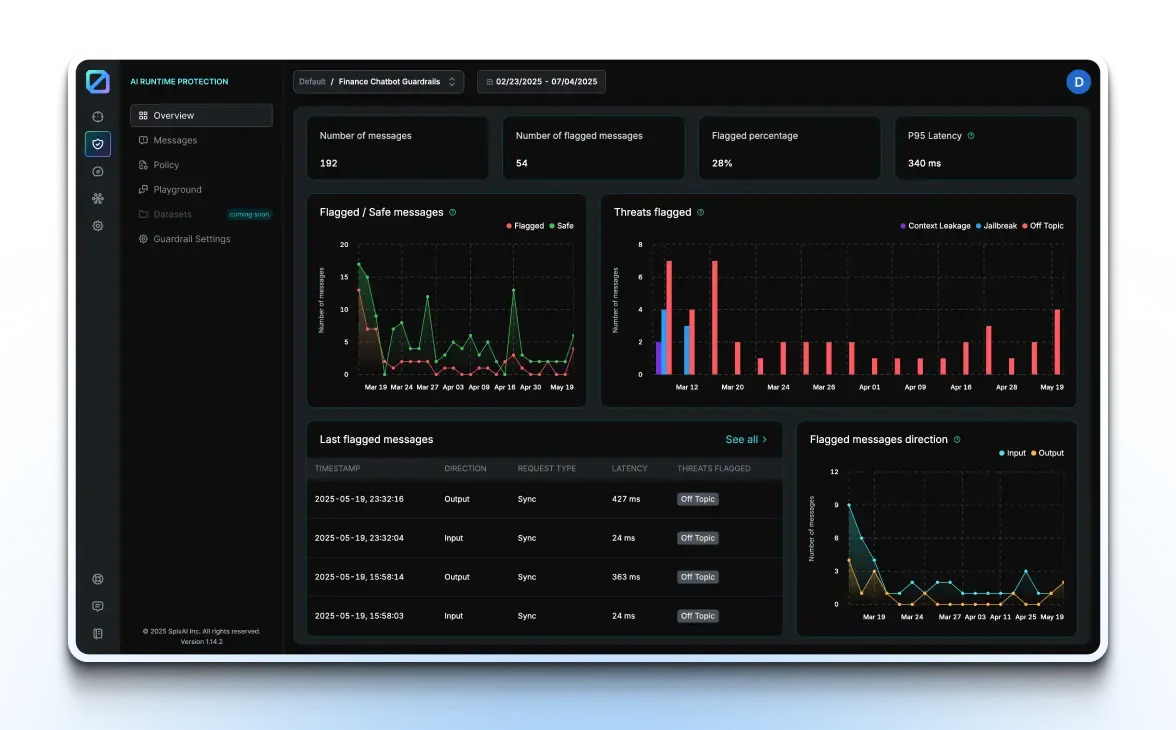

Position: Model and prompt layer

SplxAI is purpose-built for LLM security testing, focusing on how GenAI applications behave under adversarial conditions. It simulates attacks such as prompt injection, jailbreaking, and data leakage, and generates structured reports that highlight model-layer risks and failure modes.

Best for: Teams building LLM-powered applications that need dedicated validation of model behavior beyond traditional AppSec tools.

Trade-off: Limited to the model and prompt layer. It does not provide visibility into application or API vulnerabilities.

Pricing

According to AWS Marketplace listings, SplxAI pricing starts at around $20,000 per year and scales to approximately $50,000 annually for enterprise plans.

Ratings & reviews

SPLX.ai holds a 5/5 rating on Gartner Peer Insights, with users highlighting its strong technical expertise, proactive support, and effective integration of AI red teaming into CI/CD workflows.

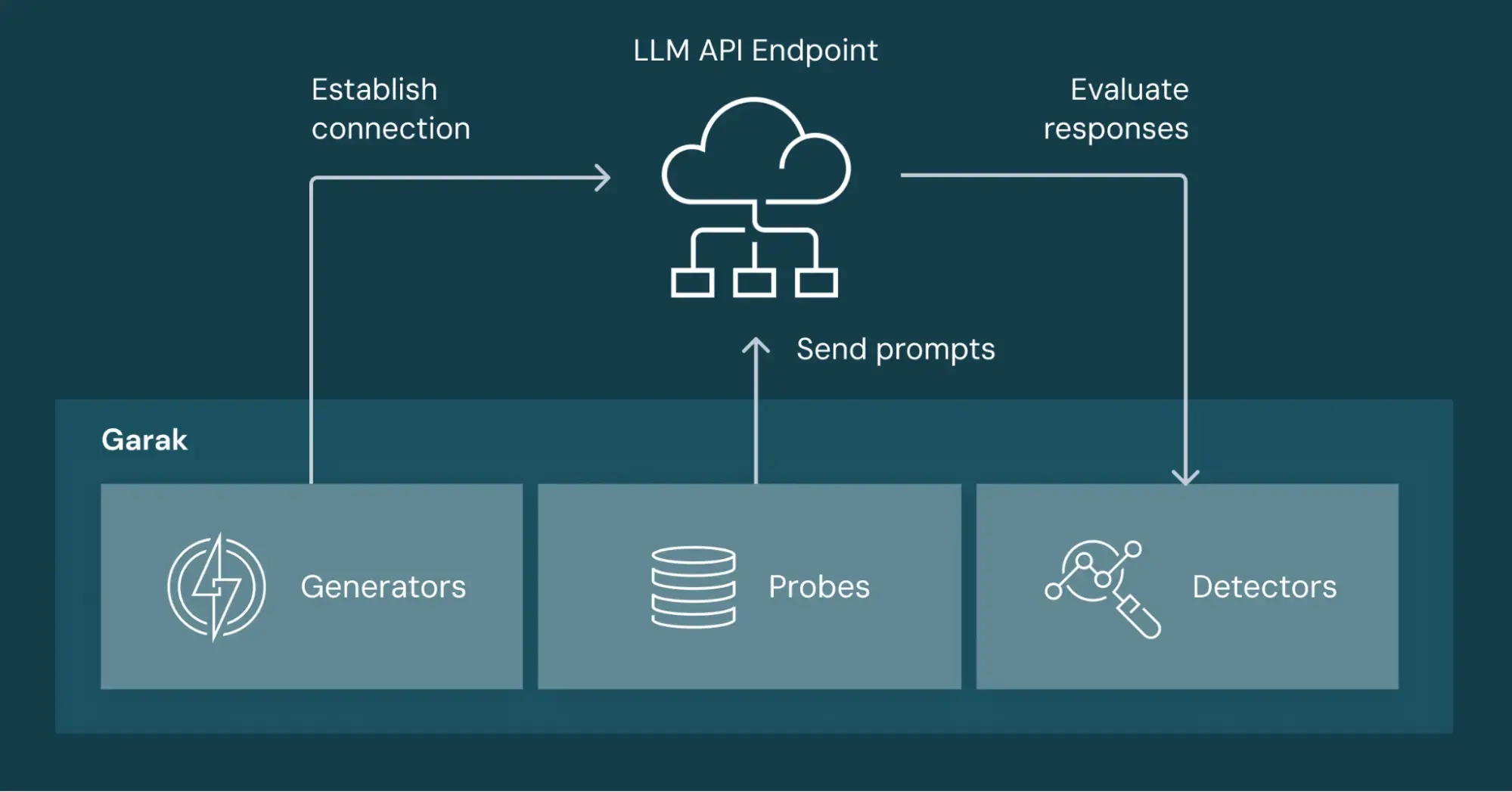

5. Garak

Position: Model and prompt layer

Source:__databricks

Garak is an open-source LLM vulnerability scanner developed by NVIDIA. It probes models for failure modes such as hallucination, prompt injection, toxicity, and unintended data exposure. Its extensibility allows teams to customize tests and run deeper evaluations on model behavior.

Best for: Security teams and researchers working with custom or self-hosted models who need flexible, in-depth LLM testing.

Trade-off: Requires technical setup and expertise. Not designed as a plug-and-play enterprise tool.

Pricing

License: Open-source (Free).

Cost Factor: While the tool is free, running comprehensive scans on commercial LLMs (e.g., GPT-4) can incur API token costs.

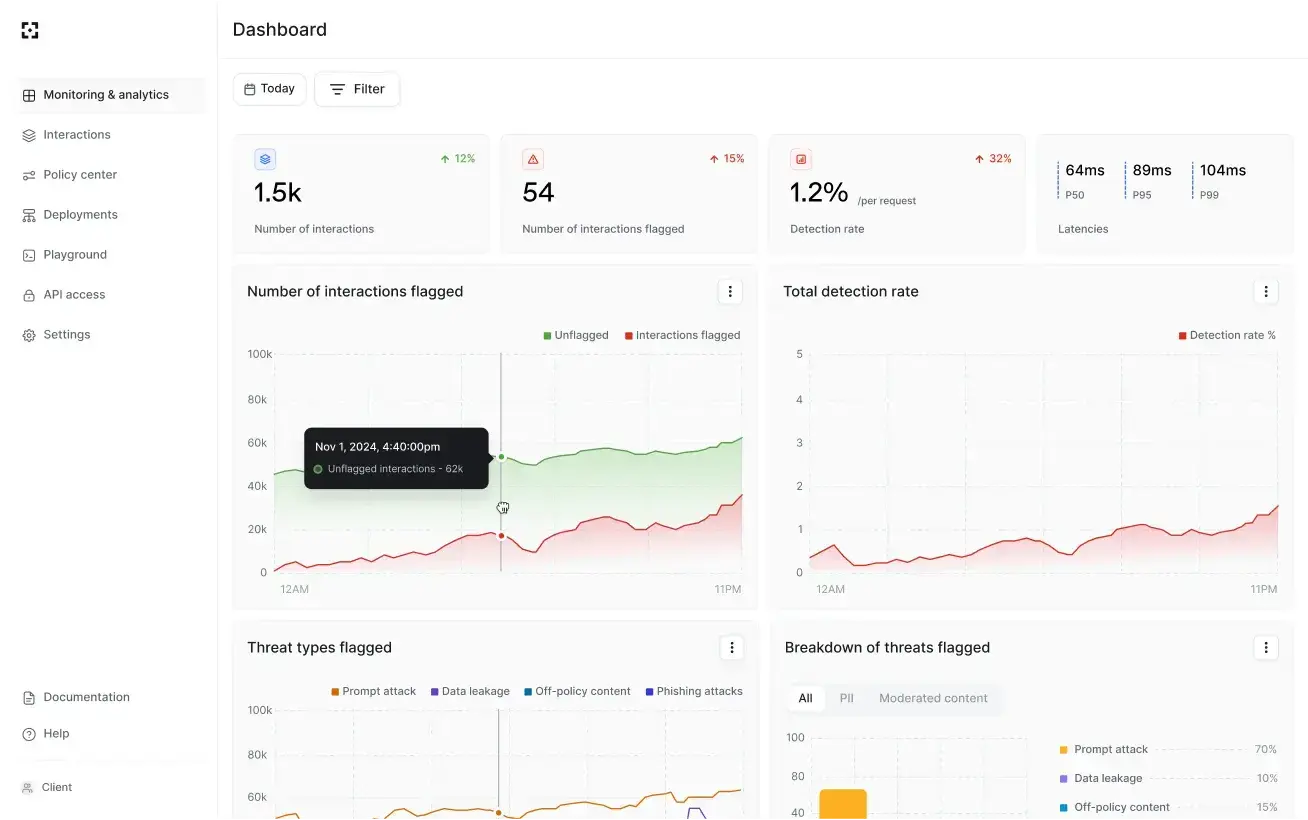

6. Lakera Guard

Position: Model and prompt layer

Lakera Guard provides real-time protection against prompt injection and unsafe inputs. It sits between user input and the LLM, enforcing policies and blocking malicious or non-compliant prompts before they reach the model, helping reduce the risk of data leakage and unsafe outputs.

Best for: Teams running production GenAI applications that need runtime protection at the model interaction layer.

Trade-off: Focused on runtime defense. It complements testing tools but does not replace proactive security validation.

Pricing

According to industry analysis and vendor positioning, Lakera Guard follows a quote-based pricing model, with free trial access and custom enterprise plans based on usage volume, features, and deployment type.

Ratings & reviews

Lakera Guard currently holds a 5/5 rating on G2, with early users highlighting its effectiveness in protecting against AI-driven threats, though some note limited customization options and higher cost.

7. Snyk DeepCode AI

Position: Code pipeline layer

Snyk DeepCode AI identifies vulnerabilities in code, including code written or assisted by AI tools, and provides fix suggestions directly within developer workflows. It combines SAST, SCA, and container security, helping teams detect and remediate issues early in the development cycle.

Best for: Developer-first teams aiming to secure AI-assisted coding practices without slowing down release velocity.

Trade-off: AI-generated fix suggestions require developer validation and should not be applied without review.

Pricing

Free tier available

Paid plans start around $25/month for teams

Enterprise: Custom quote

Ratings and reviews

Snyk holds a strong 4.5/5 rating on G2, with users highlighting its ease of use, seamless CI/CD integration, and actionable remediation guidance that helps developers quickly fix vulnerabilities. However, some reviewers note a relatively higher rate of false positives in its detection results.

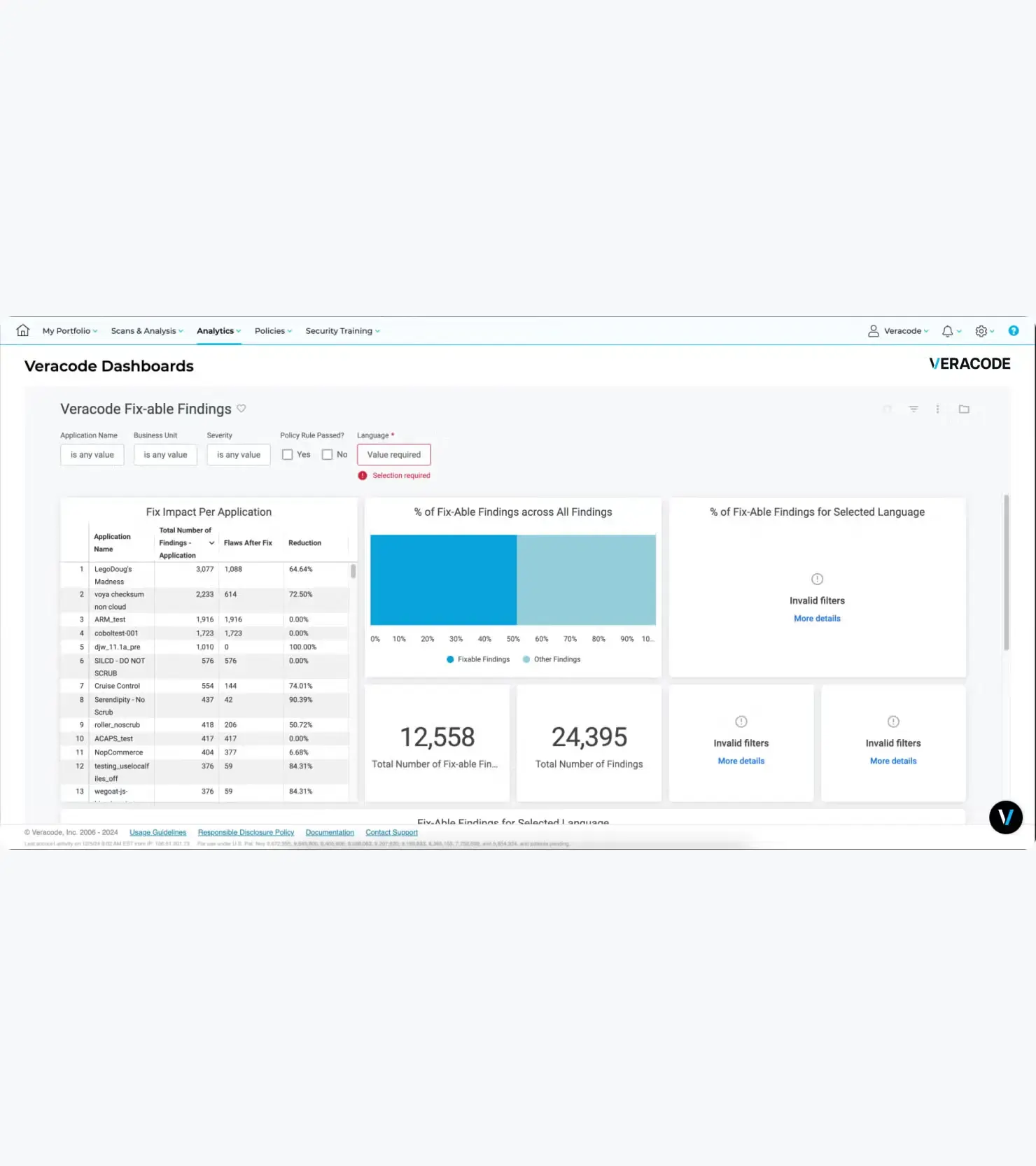

8. Veracode Fix

Position: Code pipeline layer

Veracode Fix adds an AI-powered remediation layer on top of Veracode’s SAST findings. It suggests and applies fixes for vulnerabilities, including those introduced by AI-generated code, helping reduce remediation backlog and speed up resolution.

Best for: Teams already using Veracode who want to accelerate vulnerability remediation using AI-assisted fixes.

Trade-off: Works best for well-defined vulnerability patterns. Complex or context-specific issues still require manual review.

Pricing

- Quote-based depending on modules and scale. Based on public values, pricing starts approximately $15000/year for basic solutions and can go beyond $100000 for full enterprise suites.

Ratings & reviews

Veracode holds a 3.8/5 rating on G2, with users appreciating its strong integration with development tools and detailed security reporting that helps streamline vulnerability identification, though some note slower scan times that can impact development workflows.

Building your GenAI AppSec coverage map

The goal isn’t to pick the best tool on this list. It’s to identify which layers your current stack leaves exposed. Most GenAI AppSec tools solve for a specific part of the problem, which is why gaps tend to show up between layers, not within them.

Here’s what a gap at each layer looks like, and which tools address it:

Application and API layer gap:

Your GenAI product exposes web endpoints and APIs. If you’re not running authenticated penetration testing against those surfaces, including the APIs that interface with your LLM you’re relying on assumptions, not validation. This is where issues like broken access control, logic abuse, and integration flaws show up. Beagle Security covers this layer directly, including authenticated scanning and business logic testing.

Model and prompt layer gap:

If you’re not testing how your LLM responds to adversarial inputs, you’re shipping blind. Prompt injection, jailbreaks, and indirect data leakage won’t show up in traditional scans. SplxAI, Garak, and Lakera Guard each address this layer differently through adversarial testing, model probing, and runtime protection. The rise of vibe hacking makes this layer increasingly critical.

Code pipeline layer gap:

AI-generated code is moving faster than manual review can keep up with. Without controls here, insecure patterns, exposed secrets, and vulnerable dependencies can reach production unchecked. Cycode, Checkmarx, Snyk, and Veracode Fix address this layer at different points from ASPM visibility to in-IDE remediation and automated fixes.

| Tools | APP & API | Model and prompt | Code pipeline |

|---|---|---|---|

| Beagle security | ✔️ | ||

| Cycode | ✔️ | ||

| Checkmarx One Assist | ✔️ | ||

| SPlxAI | ✔️ | ||

| Garak | ✔️ | ||

| Lakera Guard | ✔️ | ||

| Snyk DeepCode | ✔️ | ||

| Veracode Fix | ✔️ |

No tool spans all three layers. A minimal but credible GenAI AppSec stack typically needs at least one tool per layer with the application and API layer often being the most overlooked, since it’s the surface that’s been there longest and is easiest to assume is covered.

What to look for as this space evolves

The GenAI AppSec tooling landscape is moving fast. Established platforms like Checkmarx, Snyk, and Veracode are adding GenAI-specific capabilities into their existing workflows, while purpose-built tools like SplxAI, Lakera, and Garak are maturing quickly and closing the gap between research-grade testing and production readiness.

What’s less likely to change is the structure of the problem. Application security, model security, and pipeline security will continue to operate as distinct layers, even as vendors try to expand across them. The next generation of GenAI AppSec tools may offer broader coverage, but in practice, teams will still need to think in terms of layered security rather than a single solution.

What to watch for is convergence with depth. Expect tighter integration between DAST tools and LLM endpoint discovery, more mature adversarial testing frameworks for agentic systems, and increasing regulatory pressure on generative AI application security. That pressure will push teams to treat coverage not just as a best practice, but as a requirement.

If you’re starting somewhere, start with the layer you expose the most.

Test the application and API layer of your GenAI-powered product. Start your 14-day free trialor explore the interactive demo to see if Beagle Security is the right fit for you.

FAQs

What are GenAI AppSec tools?

GenAI AppSec tools are security solutions designed to identify and mitigate risks in applications that use generative AI. They cover multiple layers, including application and API security, LLM security testing, and securing AI-generated code within development pipelines.

Do I need separate tools for AI security and application security?

In most cases, yes. GenAI applications introduce risks across three distinct layers: application and API, model and prompt, and code pipeline. However, no single tool provides deep coverage across all three. Most teams use a combination: an application-layer testing tool, a model-layer testing solution, and a pipeline security tool to manage AI-generated code risks.

What is prompt injection and how do you test for it?

Prompt injection is an attack where malicious input manipulates an LLM into ignoring its instructions, leaking data, or triggering unintended actions. Testing requires model-layer tools like SplxAI or Garak that generate adversarial inputs and evaluate responses. Runtime solutions like Lakera Guard help detect and block these attacks in production. Traditional tools were not designed for this class of vulnerability.